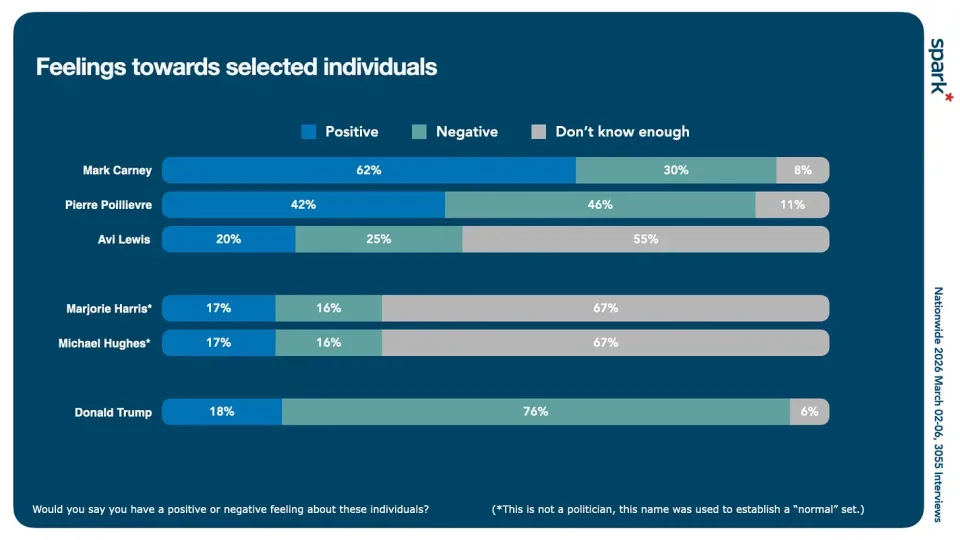

Marjorie Harris and Michael Hughes are deadlocked, with net favourability scores of +1. The thing is, they are made-up names for an experiment.

Ever since I started working in public opinion research (back in the early 1980s), I have been keen to understand the sources of bias that can creep into research unless care is taken in survey design.

One of those was “acquiescence bias”, sometimes referred to in shorthand as yea-saying. It reflects the fact that some people, especially if they don’t have a strong opinion themselves, are more likely to agree with a statement or idea than to disagree with it. It’s something one always has to take into account when polling, especially on matters where public opinion might be only lightly formed.

Lately I’ve become focused on a different thing that we see in research, which is that a certain number of people prefer to manufacture an opinion rather than say they don’t have one. This is a less studied phenomenon than yea-saying or nay-saying bias.

To shed some light on this, in a large national survey a couple of weeks ago (3055 cases, online nationwide, March 2-6, 2025) I asked respondents to give me a favourability rating for a lengthy list of political figures, including Canadian leaders and some Cabinet Ministers, and senior Conservatives, as well as Donald Trump.

I included two made-up names in the list: Marjorie Harris and Michael Hughes.

Equal numbers of people like and dislike Harris and Hughes.

So, a total of a third of the sample came up with an opinion about a politician that they didn’t know anything about, instead of choosing the answer “don’t know enough about them to have an opinion”.
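For readers who want the arithmetic behind a net favourability score, here is a minimal sketch. It assumes net favourability is simply the percentage with a favourable view minus the percentage with an unfavourable view, both calculated on the full sample. The function name, the response labels and the counts are all hypothetical and are not the survey's actual data.

```python
from collections import Counter


def favourability_summary(responses):
    """Summarise favourability ratings for one name.

    `responses` is a list of labels: "favourable", "unfavourable"
    or "dont_know". These labels are assumptions for illustration.
    """
    total = len(responses)
    if total == 0:
        raise ValueError("no responses to summarise")
    counts = Counter(responses)
    favourable_pct = 100 * counts["favourable"] / total
    unfavourable_pct = 100 * counts["unfavourable"] / total
    return {
        "favourable_pct": favourable_pct,
        "unfavourable_pct": unfavourable_pct,
        # Net score: likers minus dislikers, in percentage points.
        "net": favourable_pct - unfavourable_pct,
        # Share of the sample that offered any opinion at all.
        "opinion_pct": favourable_pct + unfavourable_pct,
    }


# Hypothetical counts, chosen only to illustrate the calculation.
responses = ["favourable"] * 17 + ["unfavourable"] * 16 + ["dont_know"] * 67
summary = favourability_summary(responses)
print(summary)
```

The point of separating `net` from `opinion_pct` is that a net score near zero says nothing about how many people actually offered a rating.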

I wanted to find out as much as I could about those who made up an answer, compared to those who said they didn’t know. And to compare those who were “likers” with those who were “dislikers”.

Finally, I wanted to know what including the responses of these respondents in our reporting meant in terms of introducing any element of unreliability to the findings.

The people who rated the phantom names were:

There are strong patterns among those who like or dislike the phantom names and how they rate real politicians.

As a general rule, if you liked Harris or Hughes you were more likely to like the real politicians, and vice versa. It is connected to, but not hard-wired to, political partisanship. You can be a “disliker” and like Carney, or a “liker” and dislike Trump. Likers like both Carney and Poilievre, but not to the same degree.

Does including those who create an instant like or dislike opinion have an effect on our voting intention data? Yes and no.

If we remove all those who had an opinion about the made-up names from our sample, there is zero overall effect on voting intention. With or without them, our results show a 15-point lead for the Liberals, at 45-30.

That’s not to say the likers and dislikers had no effect at all, but rather that the effects cancelled each other out. Among those who liked Michael and Marjorie, the Liberal lead was 19 points. Among those who disliked the phantom politicians, the Liberal lead was just 6 points.
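The kind of check described above, comparing the lead in the full sample with the lead once the phantom raters are removed, can be sketched as follows. This is an illustration under stated assumptions rather than the analysis actually run: the record layout, the `lead` and `make_group` helpers, and every count are hypothetical, picked so that two subgroups with different leads offset each other.

```python
def lead(respondents, party_a="Liberal", party_b="Conservative"):
    """Percentage-point lead of party_a over party_b in a group."""
    n = len(respondents)
    if n == 0:
        raise ValueError("empty group")
    a = sum(1 for r in respondents if r["vote"] == party_a)
    b = sum(1 for r in respondents if r["vote"] == party_b)
    return 100 * (a - b) / n


def make_group(liberal, conservative, other, phantom_rating):
    """Build hypothetical respondent records for one subgroup."""
    votes = (["Liberal"] * liberal
             + ["Conservative"] * conservative
             + ["Other"] * other)
    return [{"vote": v, "phantom": phantom_rating} for v in votes]


# Hypothetical subgroups (not the survey's real counts).
non_raters = make_group(90, 60, 50, "dont_know")  # 200 people
likers = make_group(26, 16, 8, "like")            # 50 people
dislikers = make_group(21, 16, 13, "dislike")     # 50 people
sample = non_raters + likers + dislikers

# Drop everyone who offered an opinion on the made-up names.
filtered = [r for r in sample if r["phantom"] == "dont_know"]

print("full sample lead:   ", lead(sample))
print("without raters lead:", lead(filtered))
print("likers lead:        ", lead(likers))
print("dislikers lead:     ", lead(dislikers))
```

Because the combined lead is a size-weighted average of the subgroup leads, a high lead among one group and a low lead among another can leave the overall figure unchanged.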

So what does it all mean? In my view, people who make up an opinion on the spot are legitimate respondents, and their point of view on other variables deserves to be included in the samples. It’s good to keep an eye on variables like this to stress test the accuracy of data sets.

For people considering running for office, it’s useful to know when you are measuring your public opinion standing that the baseline is a total of about 33% offering an opinion even though they don’t really know you, with a net score at or close to even.

For those consuming polls to keep track of politics, it’s a reminder to consume with eyes wide open. If you are seeing reports that are highly declaratory and describe a populace that is deeply attentive to politics, apply a filter in your mind. Remember that a third of people likely won’t vote, and that half of those who will vote are paying little attention to politics most of the time.

spark*insights is led by Bruce Anderson, one of Canada’s leading and most experienced public opinion researchers. From polling and research to analysis and guidance, we help organizations uncover the factors driving or influencing public perception and gain valuable insights into the shape and movement of the landscape.